Yiorgos Makris, Professor


Research Interests (Update Coming Soon)


Current Focus:

Earlier Interests:

Hardware Security and Trustworthiness

(Funded by NSF, ARO, and SRC)

Partly because of design outsourcing and migration of fabrication to low-cost areas across the globe, and partly because of increased reliance on third-party intellectual property and design automation software, the integrated circuit supply chain is now considered far more vulnerable than ever before. Among the various threats and concerns, our research initially focused on developing a method for hardware Trojan detection through Statistical Side-Channel Fingerprinting in order to enhance the security and trustworthiness of digital integrated circuits and systems. We then became particularly interested in Hardware Trojans in Wireless Cryptographic ICs, since such ICs constitute a prime attack candidate due to their ability to communicate encrypted data over public channels. In addition to hardware Trojans, we are interested in developing statistical solutions towards Counterfeit IC Identification. Furthermore, we are also investigating methods for proving trustworthiness of third-party intellectual property through the use of Proof-Carrying Code principles and the development of a Proof-Carrying Hardware Intellectual Property (PCHIP) framework.

Statistical Side-Channel Fingerprinting

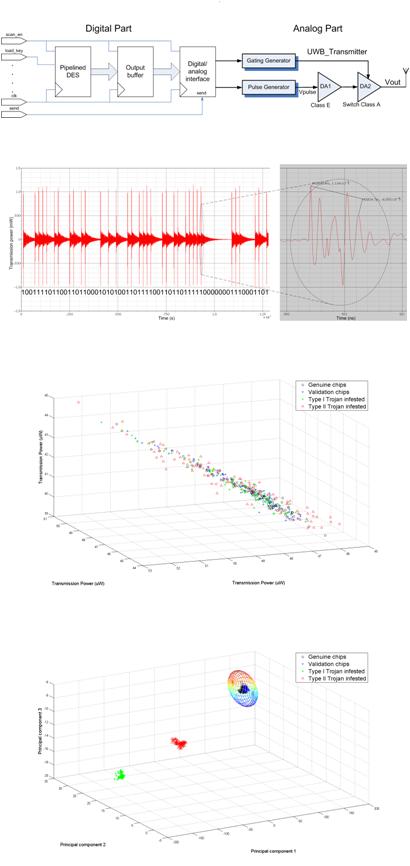

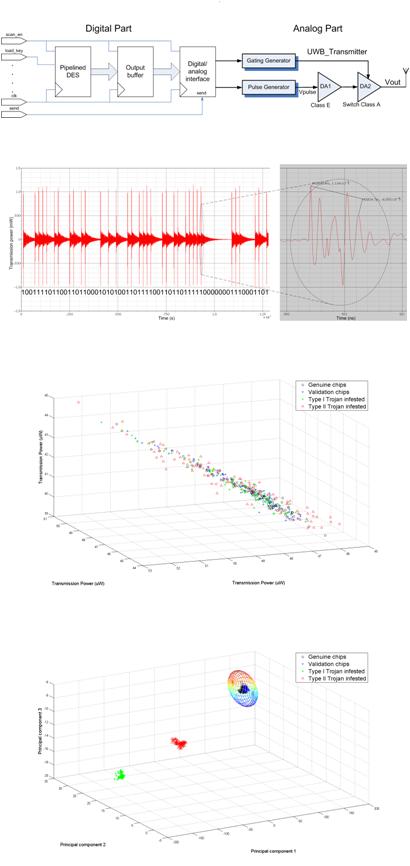

Malicious modifications, known as hardware Trojans, may provide additional functionality which is unknown to the designer and user of an IC, but which can be exploited by a perpetrator after deployment to sabotage or incapacitate a chip, or to steal sensitive information. Towards detecting such hardware Trojans, my group contributed a key observation: while a carefully crafted hardware Trojan can evade detection by typical industrial test methods, it will distort the parametric space of an IC (e.g., power consumption, path delay, operating frequency, supply current, etc.). Therefore, a well-chosen set of parametric measurements can serve as a fingerprint which can be combined with powerful statistical analysis methods to distinguish Trojan-free from Trojan-infested ICs, as we demonstrated in various hardware-Trojan types. Our statistical side-channel fingerprinting paradigm has since been extended by a large body of work, employing multiple parametric measurements and various statistical methods to enhance detection resolution, thereby corroborating our original observation. Recently, we also contributed a solution to a key limitation of statistical side-channel fingerprinting, namely its reliance on availability of a small set of Trojan-free ICs. Specifically, we demonstrated that equal effectiveness may be achieved through a trusted simulation model alongside trusted on-chip process control monitors, leading to golden-chip free hardware Trojan detection.
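The fingerprinting idea can be illustrated with a minimal sketch. It assumes each chip is summarized by a short vector of parametric measurements and substitutes a simple per-measurement z-score distance for the statistical methods used in the actual work; the function names, the threshold, and all numbers are hypothetical.

```python
import math
import statistics

def fit_fingerprint_model(trusted):
    """Per-measurement mean and sample standard deviation of the trusted population."""
    columns = list(zip(*trusted))
    means = [statistics.mean(c) for c in columns]
    stdevs = [statistics.stdev(c) for c in columns]
    return means, stdevs

def fingerprint_distance(model, chip):
    """Euclidean distance of a chip from the trusted population, in z-score units."""
    means, stdevs = model
    return math.sqrt(sum(((x - m) / s) ** 2 for x, m, s in zip(chip, means, stdevs)))

def is_suspicious(model, chip, threshold=4.0):
    """Flag a chip whose fingerprint falls outside the trusted region (assumed threshold)."""
    return fingerprint_distance(model, chip) > threshold

# Hypothetical fingerprints: (supply current in mA, path delay in ns).
trusted_chips = [(10.1, 2.00), (9.9, 2.05), (10.0, 1.95), (10.2, 2.02), (9.8, 1.98)]
model = fit_fingerprint_model(trusted_chips)

print(is_suspicious(model, (10.0, 2.01)))  # within process variation
print(is_suspicious(model, (11.5, 2.40)))  # distorted parametric profile
```

In this sketch the trusted population comes from Trojan-free chips; in the golden-chip-free setting described above, it would instead be derived from a trusted simulation model and on-chip process control monitors.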

Hardware Trojans in Wireless Cryptographic ICs

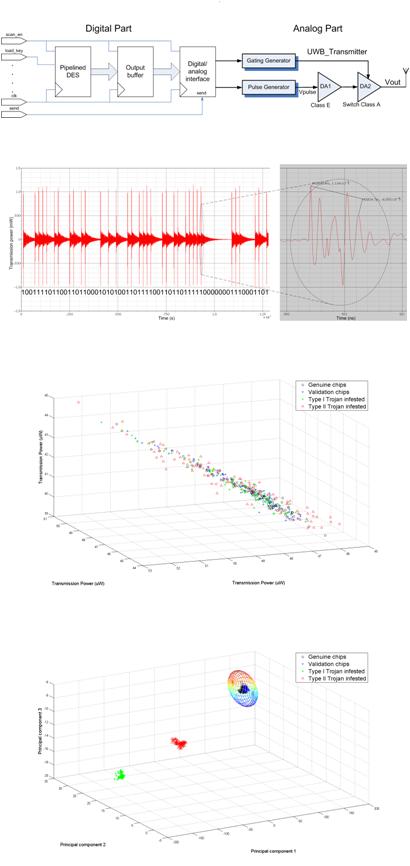

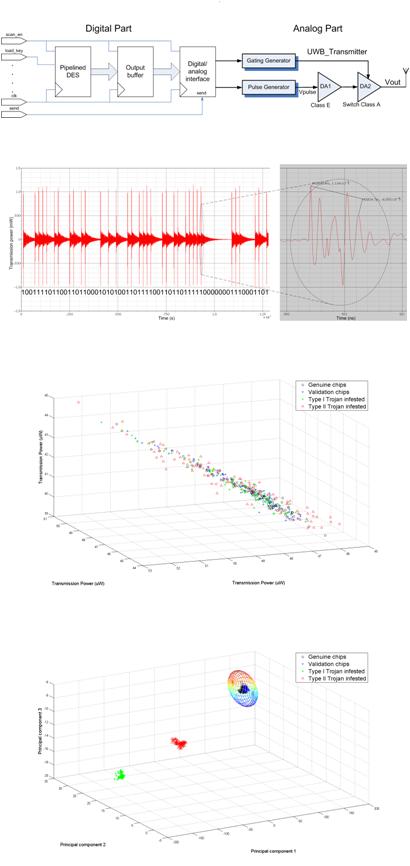

We contributed the first study of hardware Trojans in wireless cryptographic integrated circuits, wherein the objective is to leak secret data (i.e., the encryption key) through the wireless channel. Using a mixed-signal system-on-chip consisting of an AES encryption core and a UWB transmitter, we demonstrated two key findings. First, minute modifications suffice for hiding the leaked information in the transmission margins allowed for process variations and noise, without violating any digital, analog, or system-level specifications of the wireless cryptographic IC and, hence, without being detectable by production test methods. Second, for the attacker to be able to discern the leaked information from the legitimate signal, effective hardware Trojans have to impose added structure on the transmission parameters to which the attacker has access (e.g., transmission power). While this structure is not known to the defender, statistical side-channel fingerprinting can reveal its existence and, thereby, expose the hardware Trojan. Recently, we contributed the first demonstration of these findings in actual silicon using Trojan-free and Trojan-infested versions of a wireless cryptographic IC which we designed and fabricated. Additionally, we introduced the first real-time method for detecting hardware Trojans which are dormant at test time and only become active after IC deployment, through the use of on-chip sensors and classifiers which monitor an invariant property and continuously evaluate whether the IC ceases to operate within a trusted region.

Counterfeit IC Identification

Another contemporary problem related to hardware security and trust, for which my group proposed an original solution based on machine learning, is that of counterfeit ICs. Specifically, we developed a method for distinguishing used/recycled ICs, which constitute the most common type of counterfeit ICs, from their brand new counterparts using Support Vector Machines (SVMs). We demonstrated that we can train a one-class SVM classifier using only a distribution of process variation-affected brand new devices to accurately tell the two classes apart based on simple parametric measurements. We showed the effectiveness of the proposed method using a set of actual microprocessor devices fabricated by Texas Instruments (TI) and subjected to extensive failure analysis burn-in test, in order to mimic the impact of aging degradation over time. Effectiveness in the analog domain, as well as in detecting other types of counterfeit ICs, such as reverse-engineered, remarked, or off-specification devices, has also been demonstrated.

Proof-Carrying Hardware Intellectual Property (PCHIP)

We introduced the use of Proof-Carrying Code (PCC) principles in certifying security properties of third-party hardware intellectual property (IP). The use of third-party hardware IP, in the form of code in a Hardware Description Language (HDL), enables fast development of new electronic systems and is prevalent in both commercial and defense applications. To alleviate the security and trustworthiness concerns arising from third-party hardware IP, we adapted the well-known PCC paradigm from the software community to enable formal yet computationally straightforward validation of security-related properties. These properties, agreed upon a priori by the IP vendor and consumer and codified in a temporal logic, outline the boundaries of trusted operation, without necessarily specifying the exact IP functionality. A formal proof is then crafted by the vendor and presented to the consumer, who can automatically validate compliance of the hardware IP with the agreed-upon security properties. Our initial proof-of-concept framework has been extended to support information flow tracking and reasoning at the microprocessor ISA level, and has gradually turned into an ecosystem for development of provably trustworthy hardware IP, including foundations, libraries, automation and examples on cryptographic hardware.

Applications of Machine Learning in Robust Design & Test of Analog/RF ICs

(Funded by NSF, SRC and an IBM/GRC Fellowship)

Throughout the design and production lifetime of an analog/RF integrated circuit, a wealth of data is collected for ensuring its robust and reliable operation. Ranging from design-time simulations to process characterization monitors on first silicon, and from high-volume specification tests to diagnostic measurements on chips returned from the field, the information inherent in this data is invaluable. Our research focuses on identifying meaningful data correlations using machine learning and statistical analysis, and applying them towards Reducing Test Cost through the use of classifiers, Enhancing Yield via post-manufacturing performance calibration, and On-Die Learning for Analog/RF BIST & Self-Calibration through the use of analog neural networks. Additionally, we are developing advanced statistical analysis methods for Process Variation Modeling and Yield Learning. We also developed and applied similar methods for Characterizing and Testing Nanodevices and we are investigating their utility in nano-scale architectures.

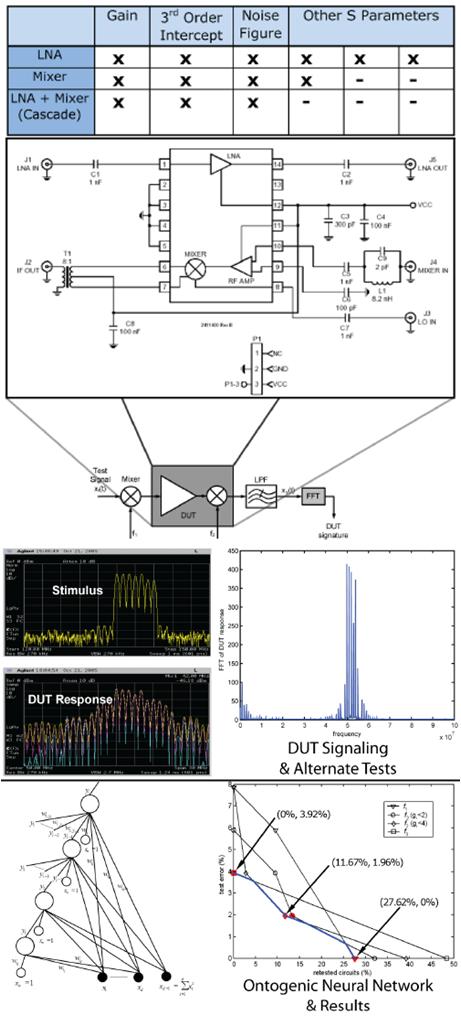

Machine Learning-Based Testing of Analog/RF ICs

While specification testing remains the only industrially practiced test method for analog/RF circuits, the instrumentation and time required for explicitly measuring performances and comparing them to design specifications for each fabricated chip is no longer an economically viable option. Towards test cost reduction in analog/RF circuits, we developed a machine learning-based method which utilizes information learned from a small training set of fully tested analog/RF chips, in order to reduce test cost for the rest of the production. The fundamental advantage of our method is that it not only predicts the pass/fail labels of devices based on a set of low-cost measurements, but also assesses the confidence in this prediction and retests devices for which this confidence is insufficient through traditional specification testing. Thus, this two-tier test approach sustains the high accuracy of specification testing while leveraging the low cost of machine learning-based testing. By varying the desired level of confidence, it also enables exploration of the trade-off between test cost and test accuracy and facilitates the development of cost-effective test plans. The effectiveness of this method has been demonstrated using industrial test data provided by Intel, IBM, and Texas Instruments, both in the context of specification test compaction, where a subset of existing performance measurements is dropped, and in the context of alternate testing, where simpler measurements replace the performance measurements altogether.
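The two-tier flow can be sketched as follows. This is an illustration under stated assumptions, not the published method: the learned model is reduced to a hypothetical scalar score computed from low-cost measurements, and confidence is modeled as the distance of that score from the decision threshold (a guard band). All names and values are made up.

```python
def two_tier_test(score, spec_test, threshold=0.0, guard_band=0.5):
    """Return (passed, used_spec_test) for one device.

    score      -- hypothetical model output from low-cost measurements
    spec_test  -- callable running the full (expensive) specification test
    guard_band -- scores closer than this to the threshold are not trusted
    """
    if abs(score - threshold) >= guard_band:
        # Confident prediction: accept the low-cost decision.
        return score > threshold, False
    # Insufficient confidence: fall back to specification testing.
    return spec_test(), True

# Hypothetical devices: model score plus the outcome a full spec test would give.
devices = [
    {"score": 2.1, "meets_spec": True},
    {"score": -1.7, "meets_spec": False},
    {"score": 0.2, "meets_spec": False},   # model alone would mislabel this one
    {"score": -0.3, "meets_spec": True},   # and this one
]

results = [two_tier_test(d["score"], lambda d=d: d["meets_spec"]) for d in devices]
retested = sum(used for _, used in results)
print(results, retested)
```

Widening `guard_band` sends more devices to specification testing (higher cost, higher accuracy); narrowing it does the opposite, which is the trade-off described above.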

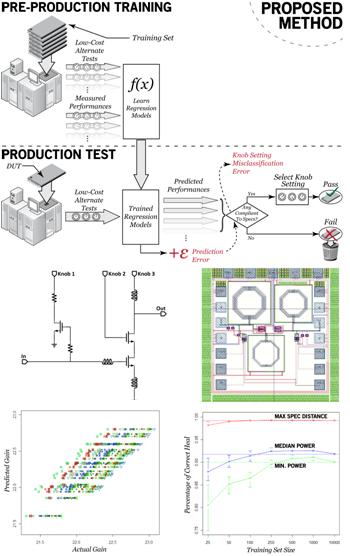

Post-Production Performance Calibration of Analog/RF ICs

In modern analog/RF device fabrication, circuits are typically designed conservatively to ensure high yield. Thus, analog designers often find themselves doubly constrained by performance and yield concerns. This has, in turn, sparked interest in analog/RF devices that are tunable after fabrication using "knobs" (post-production tunable components). By adjusting the knobs, some devices that would simply be discarded under the traditional analog/RF test regime can be tuned to meet specification limits and, thereby, improve yield. Our research focuses on understanding and systematically constraining the complexity of post-production performance calibration in analog/RF devices, in order to enable a cost-effective implementation. Moreover, we are developing a detailed cost model permitting direct comparison of performance calibration methods to industry-standard specification testing. The effectiveness of our one-shot calibration method has been demonstrated and compared to other methods using real measurements from a tunable RF LNA, which we designed and fabricated.

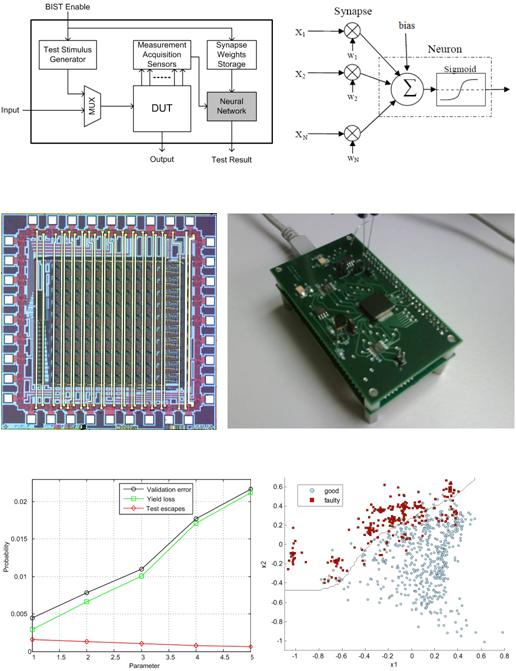

On-Die Learning for Analog/RF BIST & Self-Calibration

The success of neural networks in low-cost test and calibration of analog/RF ICs has prompted us to investigate the possibility of integrating them in hardware in order to enable self-test and self-calibration after deployment of analog/RF ICs in their field of operation. To this end, we designed and fabricated an analog neural network experimentation platform which supports various topologies and serves the purpose of prototyping solutions for on-chip integration. Our platform supports fast bidirectional dynamic weight updates for rapidly programming synapse weights during training, as well as non-volatile storage of the final weights through floating gate transistors (FGTs) operating as discretized analog memory. Our neural network has been put to the test both for self-test and for self-calibration purposes using measurements from an RF LNA. Results demonstrate that the hardware neural network has comparable learning capabilities with its software counterpart. Therefore, it provides accurate assessment of the operational health of an analog/RF IC and can tune it to adapt to its operating conditions.

Process Variation Modeling and Yield Learning

Semiconductor fabrication is an elaborate process involving a large number of steps, each of which can be considered as a source of variation. Understanding this variation and its impact on the quality of the fabricated devices and the yield of the process is particularly important. To this end, we developed a method for modeling performance correlation across a wafer via Gaussian processes and for optimizing and adapting these models to different spatial patterns. We then demonstrated effectiveness of wafer-level spatiotemporal correlation models, built through intelligent sampling, in predicting die performances in unobserved wafer locations. Additionally, we developed a method for decomposing process variation of a wafer to its spatial constituents in order to (i) identify the main contributor to yield variation, (ii) predict the actual yield of a wafer, and (iii) cluster wafers for production planning purposes. Finally, we demonstrated utility of wafer-level variation in predicting yield during migration of a product from one fabrication facility to another. The effectiveness of these models in understanding the process has been demonstrated on high-volume manufacturing data provided by TI.

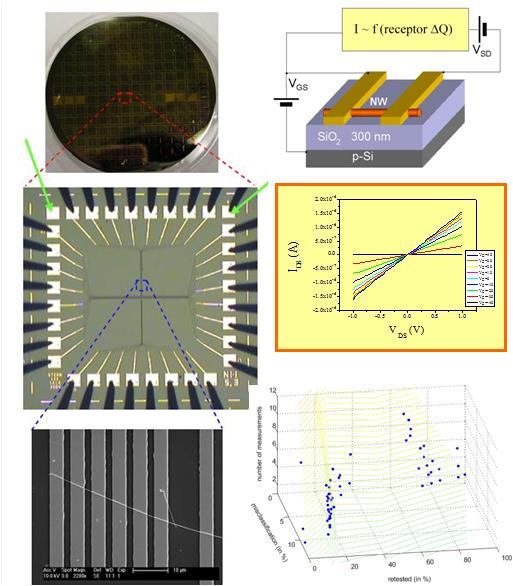

Statistical Nanodevice Characterization and Architectures

Nanofabrication causes disparities in the structure of nominally identical devices and, consequently, wide inter-device variance in their functionality. Therefore, nanodevice characterization and testing calls for a slow and tedious procedure involving a large number of measurements. As an alternative, we developed a statistical approach which learns measurement correlations from a small set of fully characterized nanodevices and utilizes them to simplify the process. We employ various machine-learning methods to predict the performances of a fabricated nanodevice, to decide whether it passes or fails a given set of specifications, and to bin it with regard to several sets of increasingly strict specifications. Our method was demonstrated using a set of fabricated nanowires within the context of nanowire-based chemical sensing.

Workload-Cognizant Reliable Design of Modern Microprocessors

(Funded by Intel Corp.)

Modern microprocessors exhibit an inherent effectiveness in suppressing a significant percentage of errors and preventing them from interfering with correct program execution (i.e., application-level masking). In essence, not all errors are equally important to the typical workload executed by a microprocessor. Therefore, a workload-cognizant understanding of the correlation between low-level errors and their Instruction-Level Impact is crucial towards developing cost-effective mitigation methods. Such Sensitivity Analysis information can prove immensely useful in allocating resources to enhance on-line testability and error resilience through concurrent error detection/correction methods. We are particularly interested in CED Methods for Microprocessor Control Logic, for which the solution space is not as well understood as for datapath components. Furthermore, we are also applying workload-cognizant impact analysis to identify and deal with Performance Faults, i.e., faults that do not affect functional correctness but simply slow down program execution in modern microprocessors.

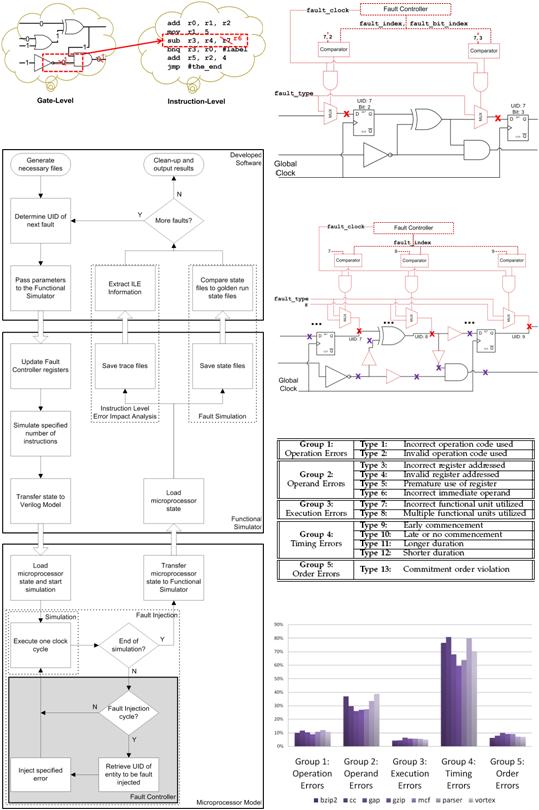

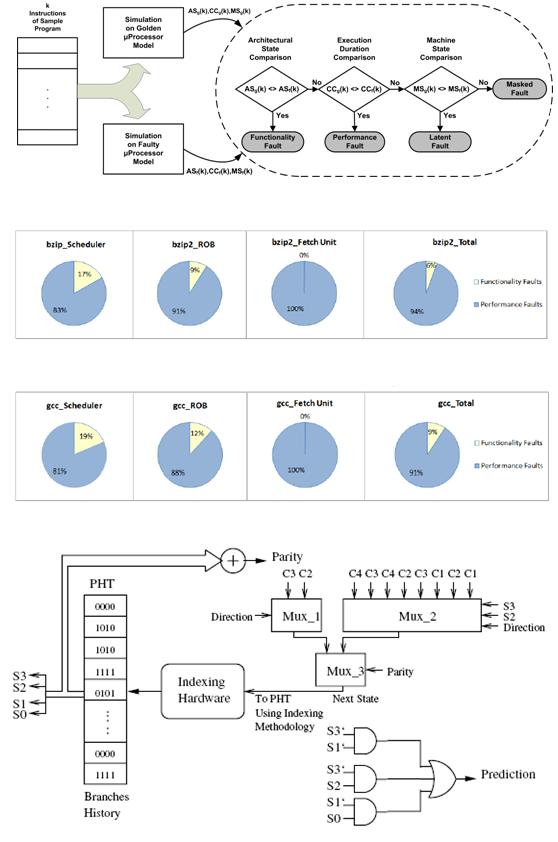

Instruction-Level Error Impact Analysis

We are interested in understanding the correlation between low-level faults in a modern microprocessor, and in particular in its control logic, and their instruction-level impact on the execution of typical workload. To this end, we developed an instruction-level error model and an extensive fault simulation infrastructure which allows injection of stuck-at faults and transient errors of arbitrary starting time and duration, as well as cost-effective simulation and classification of their repercussions into the various instruction-level error types. As a test vehicle for our study, we employ a superscalar, dynamically-scheduled, out-of-order, Alpha-like microprocessor, on which we execute SPEC2000 integer benchmarks. Extensive fault injection campaigns in control modules of this microprocessor have facilitated valuable observations regarding the distribution of low-level faults into the instruction-level error types that they cause. Experimentation with both register transfer level and gate level faults, as well as with both stuck-at faults and transient errors, confirms the validity and corroborates the utility of these observations.

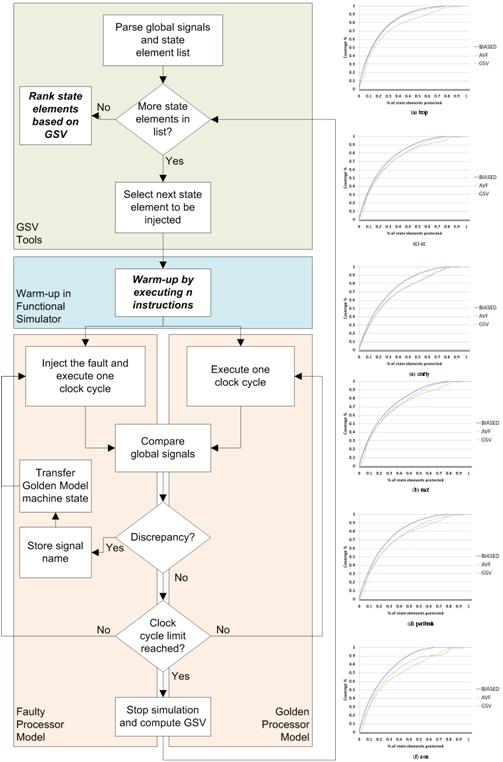

Soft Error Sensitivity Analysis

We developed a method for selective hardening of control state elements against soft errors in modern microprocessors. In order to effectively allocate resources, our method ranks control state elements based on their susceptibility, taking into account the high degree of architectural masking inherent in modern microprocessors. The novelty of our method lies in the way this ranking is computed. Unlike methods that compute the architectural vulnerability of registers based on high-level simulations on performance models, our method operates at the Register Transfer (RT-) Level and is, therefore, more accurate. In contrast to previous RT-Level methods, however, it does not rely on extensive transient fault injection campaigns, which may make such analysis prohibitive. Instead, it monitors the behavior of key global microprocessor signals in response to a progressive stuck-at fault injection method during partial workload execution. Experimentation with the Scheduler module of an Alpha-like microprocessor corroborates that our method generates a near-optimal ranking, yet is several orders of magnitude faster.

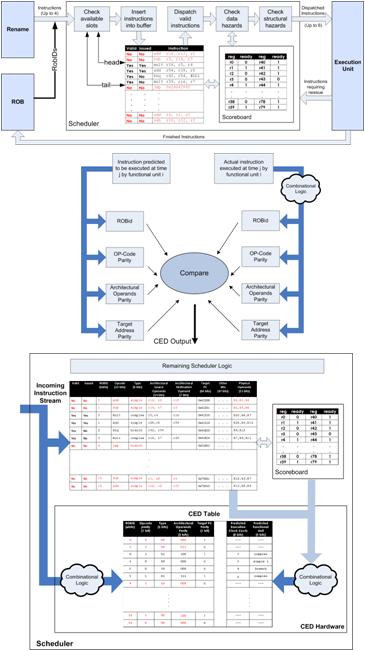

Concurrent Error Detection in Microprocessor Control Logic

We developed a method for concurrent error detection for the scheduler of a modern microprocessor. Our method is based on monitoring a set of invariances imposed through added hardware, violation of which signifies the occurrence of an error. The novelty of our solution stems from the workload-cognizant way in which these invariances are selected. Specifically, we make use of information regarding the type and frequency of errors affecting the typical workload of the microprocessor. Thereby, we identify the most susceptible aspects of instruction execution and we accordingly distribute CED resources to protect them. Our approach is demonstrated on the Scheduler of an Alpha-like superscalar microprocessor with dynamic scheduling, hybrid branch prediction and out-of-order execution capabilities. At a hardware cost of only 32% of the Scheduler, the corresponding CED scheme detects over 85% of its faults that affect the architectural state of the microprocessor. Furthermore, over 99.5% of these faults are detected before they corrupt the architectural state, while the average detection latency for the remaining faults is a few clock cycles, implying that efficient recovery methods can be developed.

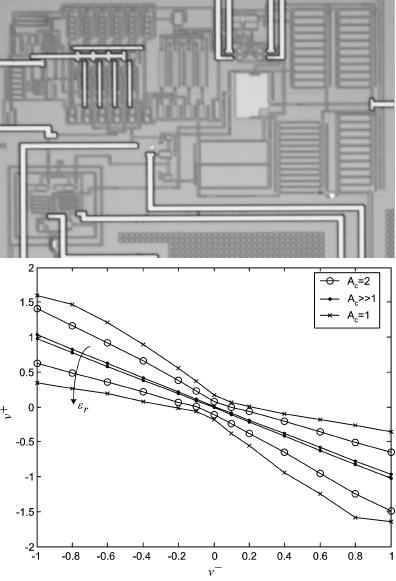

Performance Faults

Towards improving performance, modern microprocessors incorporate a variety of architectural features, such as branch prediction and speculative execution, which are not critical to the correctness of their operation. While faults in the corresponding hardware may not necessarily affect functional correctness, they may, nevertheless, adversely impact performance. We are investigating quantitatively the impact of performance faults using a superscalar, dynamically scheduled, out-of-order, Alpha-like microprocessor, on which we execute SPEC2000 integer benchmarks. Based on extensive fault simulation-based experimental results, we aim to utilize this information in order to guide the inclusion of additional hardware for performance loss recovery and yield enhancement. We have also specifically investigated the impact of errors in branch predictor units and we have developed a fault-tolerant design method which utilizes the Finite State Machine (FSM) structure of the Pattern History Table (PHT) and the set of faulty states to predict the branch direction.

Smart Observer Modules for Reliable Analog Circuits

(Funded by NSF)

Analog/RF designs constitute a rapidly increasing fraction of modern integrated circuits. This recent upsurge in interest owes itself to several factors, both technological and economical. From a technology perspective, silicon manufacturing has matured to the point where digital, analog, and even micro-electro-mechanical parts can be integrated on the same substrate. From an economical standpoint, the electronics market is experiencing an explosion of new applications where analog/RF circuits are essential, especially in wireless communications, real-time control, and multimedia. Many of these applications are mission-critical and pose demands for highly reliable circuits, yet very few error detection and correction solutions are available in the analog/RF domain. To this end, we developed Adaptive Analog Checkers for accurately comparing analog signals, as well as state estimators for performing Concurrent Error Detection in Linear Analog Circuits.

Adaptive Analog Checkers

In order to monitor the operational health of analog circuits through signal comparison, we developed the current state-of-the-art analog checkers. Unlike digital signals, where comparison is precise, in the analog domain this comparison needs to allow a tolerance window, within which the two signals are deemed equal, in order to account for process variations, noise and other non-idealities. Therefore, analog checkers examine two signals and decide whether their difference remains within this predefined tolerance window. Earlier solutions establish a statically defined window, which may be overly lenient for signals of small magnitude and overly strict for signals of large magnitude. The innovative feature of our checkers, which we designed both for differential and for duplicate signals, is that they dynamically adjust the width of the comparison window as a percentage of the compared signal magnitude, thereby making the comparison much more accurate and reducing false alarms.
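The difference between a static and an adaptive comparison window can be shown with a small behavioral sketch. It models only the decision rule, not the checker circuits themselves; the window sizes and the small absolute floor (added here so that signals near zero still have a nonzero window) are assumptions.

```python
def static_check(a, b, window=0.05):
    """Fixed tolerance window, independent of signal magnitude."""
    return abs(a - b) <= window

def adaptive_check(a, b, relative_window=0.05, floor=1e-3):
    """Tolerance window scaled as a percentage of the compared signal magnitude."""
    reference = max(abs(a), abs(b))
    return abs(a - b) <= max(relative_window * reference, floor)

# Small signals that differ by a factor of four: the static window is too lenient.
print(static_check(0.010, 0.040), adaptive_check(0.010, 0.040))

# Large signals that differ by under 3%: the static window is too strict.
print(static_check(3.00, 3.08), adaptive_check(3.00, 3.08))
```

The first pair is accepted by the static checker but rejected by the adaptive one, while the second pair is rejected by the static checker (a false alarm) but accepted by the adaptive one.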

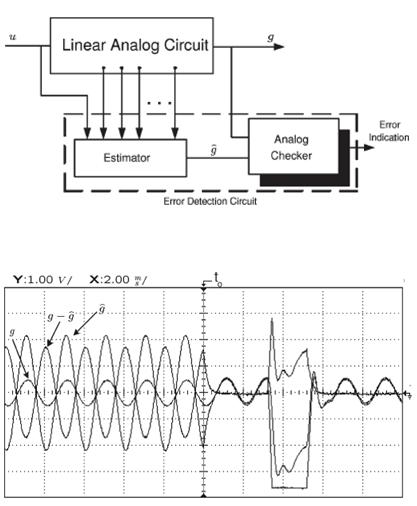

Concurrent Error Detection in Linear Analog Circuits

In order to perform CED in linear analog circuits, we developed a smart observer module that falls in the category of estimators. We developed a rigorous theory for designing an error detection circuit which monitors the inputs and a few judiciously selected internal nodes of a circuit and generates an estimate of its output. In error-free operation, this estimate converges to the actual output value in a time interval that can be made arbitrarily small. From then onwards, it follows the output exactly. In the presence of an error, however, the estimate deviates from the circuit output. Thus, CED is performed by comparison of the two signals through an analog checker, such as the ones discussed above. In essence, the error-detection circuit operates as a duplicate of the monitored circuit, yet it is much smaller than an actual duplicate.
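The estimator concept can be illustrated with a textbook Luenberger-style observer. This is a sketch under assumptions, not the design theory referenced above: it uses a hypothetical two-state discrete-time model, monitors the input and one internal node, and compares the estimated output to the actual output with a fixed tolerance. The matrices, gain, fault model, and tolerance are all made up.

```python
def run_observer(steps, fault_at=None, fault_offset=0.5, tolerance=0.01):
    """Simulate a monitored circuit and its estimator; return one alarm flag per step."""
    # Hypothetical model: x[k+1] = A x[k] + B u[k], circuit output y = x[1].
    A = ((0.9, 0.1), (0.2, 0.7))
    B = (1.0, 0.0)
    # Observer gain applied to the one monitored internal node, x[0].
    # With this gain the estimation error on the output shrinks by 0.7 per step.
    L = (0.9, 0.2)
    x = [1.0, -1.0]      # true circuit state (unknown to the estimator)
    x_hat = [0.0, 0.0]   # estimator state
    alarms = []
    for k in range(steps):
        u = 1.0 if (k // 10) % 2 == 0 else 0.0   # square-wave input
        y = x[1]
        if fault_at is not None and k >= fault_at:
            y += fault_offset                     # injected output error
        # Checker: compare the actual output against the estimate.
        alarms.append(abs(y - x_hat[1]) > tolerance)
        innovation = x[0] - x_hat[0]              # monitored node vs. its estimate
        x, x_hat = (
            [A[i][0] * x[0] + A[i][1] * x[1] + B[i] * u for i in range(2)],
            [A[i][0] * x_hat[0] + A[i][1] * x_hat[1] + B[i] * u + L[i] * innovation
             for i in range(2)],
        )
    return alarms

healthy = run_observer(60)
faulty = run_observer(60, fault_at=40)
print(any(healthy[20:]), all(faulty[40:]))
```

Alarms raised during the first few steps reflect the convergence interval and would be ignored in practice; after convergence the estimate tracks the output, and the injected error is flagged from the step at which it appears.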

Test and Reliability Solutions for Asynchronous Circuits

(Funded by DARPA/BOEING and a Yale Research Initiation Fund)

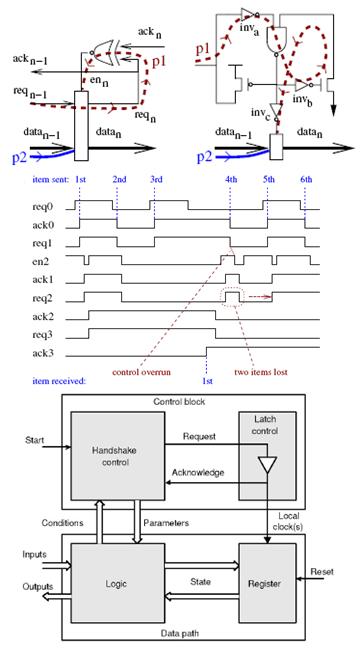

Rather than relying on a global synchronization mechanism, such as the clock signal used in synchronous circuits, asynchronous circuits establish communication through local hand-shaking protocols. Thereby, they promise several advantages, including modularity, improved performance, lower power consumption, higher robustness, lower electromagnetic interference, and elimination of clock distribution networks and clock skew problems. Asynchrony has been recently revisited as a promising solution to many of the surfacing problems in the deep submicron regime, yet the pertinent CAD infrastructure is limited, especially regarding test and reliability. Our research focuses on developing Fault Simulation and Test Generation frameworks, as well as solutions for Soft Error Detection and Mitigation both for traditional and for contemporary classes of asynchronous circuits.

Fault Simulation and Test Generation

We introduced a 13-valued algebra, a time-stamping method for maintaining partial orders of causal signal transitions, and a judicious time-frame unfolding solution, based on which we developed an efficient logic and fault simulator for asynchronous circuits. The unique capabilities of this simulator are its ability to identify hazards and critical races, to which asynchronous circuits are sensitive, as well as its high logic simulation accuracy and, by extension, its fast and efficient fault simulation performance. Based on this simulator, we developed a complete test tool-suite for traditional classes of asynchronous circuits, such as Speed-Independent and Delay Insensitive, including fault simulation (SPIN-SIM), test generation (SPIN-TEST), and test compaction (SPIN-PAC). Moreover, we demonstrated the ability of our methods to effectively test stuck-at faults, delay faults and transistor-level faults in Ultra-High-Speed Asynchronous Micro-pipelines, wherein high performance is achieved through aggressive handshaking protocols, yet functional robustness relies on timing constraints which need to be tested. Separately, we have also developed a delay test generation method for Handshake circuits.

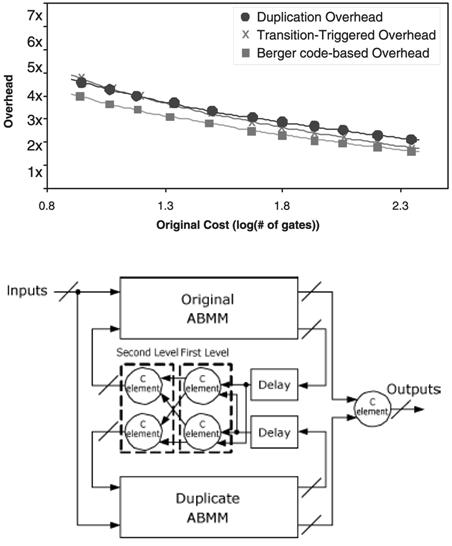

Soft Error Detection and Mitigation

We pinpointed the limitations of duplication-based CED in asynchronous circuits and we contributed the current state-of-the-art methods for concurrent error detection, error tolerance and error mitigation for Asynchronous Burst-Mode Machines (ABMMs). We devised two complete CED solutions for ABMMs, one intrusive (based on Berger codes) and one non-intrusive (based on Transition Triggering). We also proposed a soft error tolerance approach, which leverages the inherent functionality of Muller C-elements, along with a variant of duplication, to suppress all transient errors. Additionally, we introduced an error susceptibility analysis and error mitigation method for ABMMs, which enables exploration of the trade-off between the achieved error susceptibility reduction and the incurred area overhead.
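For readers unfamiliar with Berger codes, the following sketch shows the code itself, not the ABMM-specific CED scheme: the check symbol is the count of 0s in the information word, written in binary, which lets a checker detect any unidirectional error (all flips in the same direction). The function names are illustrative.

```python
def berger_check_bits(info_bits):
    """Check symbol: number of 0s in the information word, MSB first."""
    k = len(info_bits)
    width = k.bit_length()          # equals ceil(log2(k + 1)) check bits
    zeros = list(info_bits).count(0)
    return [(zeros >> i) & 1 for i in reversed(range(width))]

def berger_encode(info_bits):
    """Append the Berger check symbol to the information bits."""
    return list(info_bits) + berger_check_bits(info_bits)

def berger_valid(codeword, k):
    """Recompute the check symbol from the first k bits and compare."""
    return list(codeword[k:]) == berger_check_bits(codeword[:k])

codeword = berger_encode([1, 0, 1, 1])
print(codeword)                                   # one zero, so check symbol 001
print(berger_valid(codeword, 4))
print(berger_valid([0, 0, 0, 1, 0, 0, 1], 4))     # two 1-to-0 flips in the info bits
```

A 1-to-0 error can only raise the zero count of the information bits and only lower the value of the check symbol (and vice versa for 0-to-1 errors), so the two can never agree after a unidirectional error.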

Test and Reliability Solutions for Digital Circuits

(Funded by Intel, Micro and a Kuwait University Fellowship)

While test and reliability solutions for digital circuits are quite mature, the rapidly advancing technology continues to present challenging problems. Our research in this area has focused on two such problems. First, due to the size and complexity of modern designs, test methods can no longer handle them as monolithic entities. Therefore, we developed RTL Hierarchical Test Methods that address the problem in a divide-and-conquer manner. Second, current and near-future CMOS circuits exhibit an increased sensitivity to high-energy neutrons, protons, or alpha particles. When such particles strike a sensitive region in a semiconductor, they can generate a Single-Event Transient (SET) pulse, which may be misinterpreted by the circuit as a valid signal and result in an incorrect state and/or output (soft error). To address this problem, we developed Optimization Frameworks for Designing Reliable Circuits aiming to detect and mitigate soft errors, while taking into account design constraints such as area, performance, and power consumption.

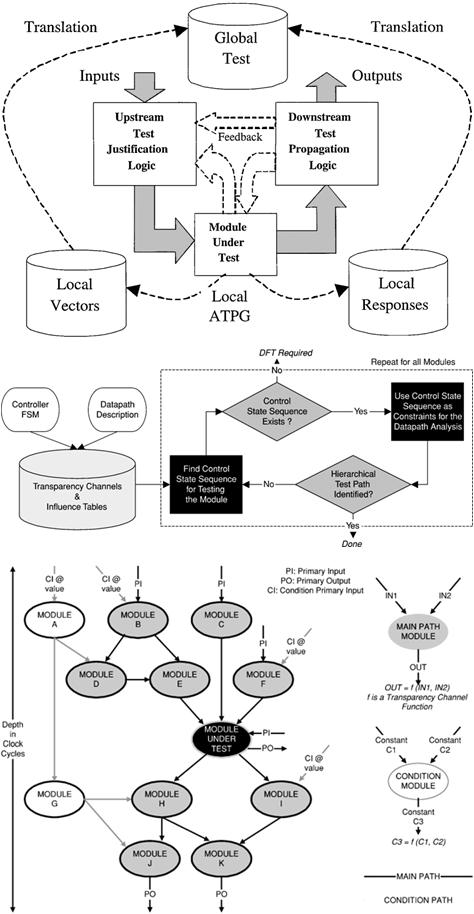

RTL Hierarchical Testability Analysis and Test Generation

In my doctoral research, I developed a hierarchical Register Transfer Level (RTL) testability analysis methodology based on the concept of modular transparency, i.e., the ability to isolate each module through reachability paths and treat it as a stand-alone entity. The underlying principle is to exploit existing transparency functionality of the surrounding logic, in order to create these reachability paths. For the purpose of expressing transparency, I introduced the notion of transparency channels and a pertinent transparency composition algebra. Unlike earlier definitions, where transparency is defined at fixed bitwidths, channels capture transparency in variable-bitwidth entities, thereby providing the ability to compose fine-grained hierarchical reachability paths. Performing such analysis at the RTL provides a hierarchical testability assessment early in the design cycle to guide DFT modifications or synthesis for hierarchical testability and support hierarchical test generation. These solutions were incorporated in TRANSPARENT (TRANSlation Path Analysis RENdering Test), a prototype tool which was transferred to Intel. Transparency paths have also been used for the purpose of design diagnosis, as well as for the purpose of on-line test and concurrent error detection based on the concept of inherent invariance.

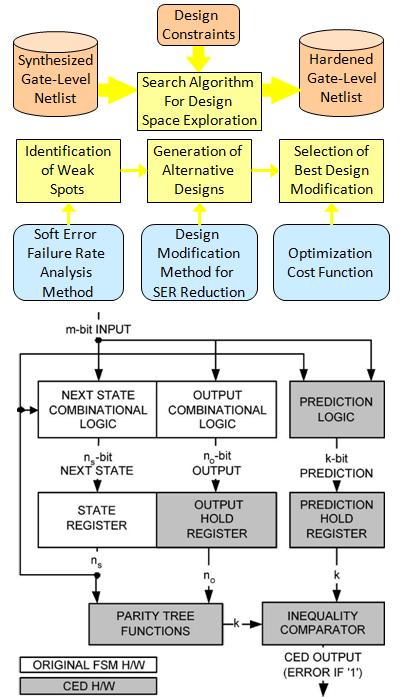

Optimization Frameworks for Designing Reliable Circuits

We developed two design-space exploration frameworks which take into account reliability parameters (e.g., error coverage, detection latency) and design constraints (e.g., area/performance overhead, power consumption), in order to evaluate alternative solutions. The first framework employs parity-based CED and uses the entropy of a function in order to explore the trade-off between error coverage and area/power overhead. Parity forest selection is formulated as an Integer Linear Programming (ILP) problem and is approximated via Randomized Rounding. The second framework employs rewiring (performed either based on ATPG or based on SPFD), which has been previously used for optimizing area, power consumption, delay, and testability of a circuit, in order to seamlessly integrate soft error mitigation solutions in the exploration of the design space. Alternatively, addition of functional redundancy can serve the same objective. We have also made several contributions in the area of concurrent error detection for Finite State Machines, based on convolutional codes, compaction and partial replication. Detection latency has also been integrated into this method.